Dating app Bumble censors lewd creeps with new tool

The popular online dating platform will add a new feature to censor inappropriate content.

Bumble wants you to keep it in your pants while using its dating app.

The popular online dating platform will roll out a tool called the Private Detector in June, designed to censor inappropriate content. The new feature will be available for Bumble as well as other dating apps and social networks owned by the group, including Badoo, Chappy and Lumen.

The artificial intelligence tool reportedly has a 98 percent accuracy rate in flagging restricted content, including guns and nudity.

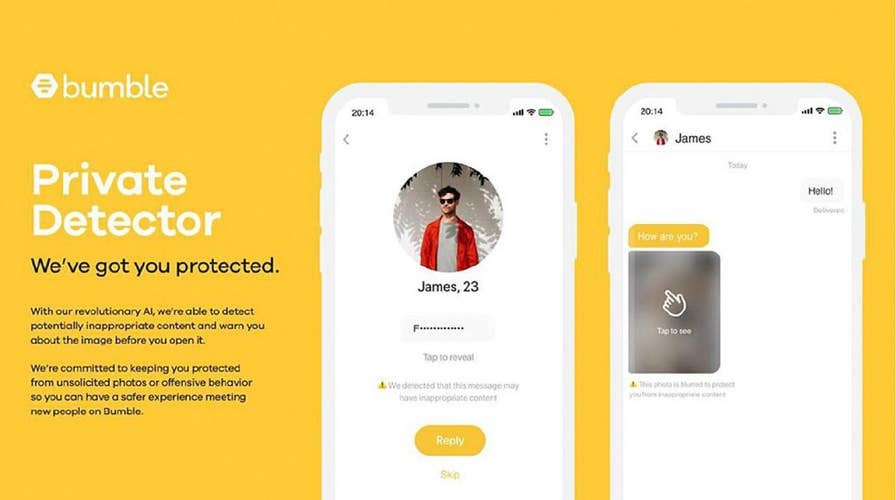

After it rolls out, the Private Detector will automatically blur any images suspected of being lewd. Those who receive the images will then be able to choose whether to view them, block the image or report the offender to moderators of the app. (Courtesy of Bumble)

Andrey Andreev, founder of the group of dating apps that includes Bumble, said in a press release that the AI tool is an example of the brand’s commitment to keeping its users safe.

“The sharing of lewd images is a global issue of critical importance and it falls upon all of us in the social media and social networking worlds to lead by example and to refuse to tolerate inappropriate behavior on our platforms,” Andreev said.

Bumble CEO Whitney Wolfe Herd, also the founder of the female-focused dating app, is leading a separate effort to develop a bill that would make the online sharing of lewd photos a punishable crime.

Wolfe Herd has been working with Texas state lawmakers on the bill, which would hold accountable those who share inappropriate images. According to the press release, the bill unanimously passed the Committee on Criminal Jurisprudence and will be debated on the floor of the Texas House of Representatives.

“The digital world can be a very unsafe place overrun with lewd, hateful and inappropriate behavior. There’s limited accountability, making it difficult to deter people from engaging in poor behavior,” added Wolfe Herd. “I really admire the work Andrey has done for the safety and security of millions of people online and we, along with our teams, want to be a part of the solution.”