Google, which already uses artificial intelligence to power its search, video and news offerings, unveiled three new AI-enhanced features at an event commemorating its twentieth anniversary on Monday.

The tech giant debuted three features designed to make visual content more appealing and useful for its users: auto-generated “immersive” content, video previews and better image searching.

“When Search first began, our results were just plain text,” said Cathy Edwards, director of engineering at Google Images, in a blog post. “Today, we’re introducing three fundamental shifts in how we think about Search, including a range of new features that use AI to make your search experience more visual and enjoyable.”

The first change involves AMP Stories, an “open source library” that allows publishers (and anyone else) to create web-based flipbooks with lots of bells and whistles. The library was originally created to help publishers increase their page load speed. These visual stories will now surface in Google Images and Discover, the company said.

(Google)

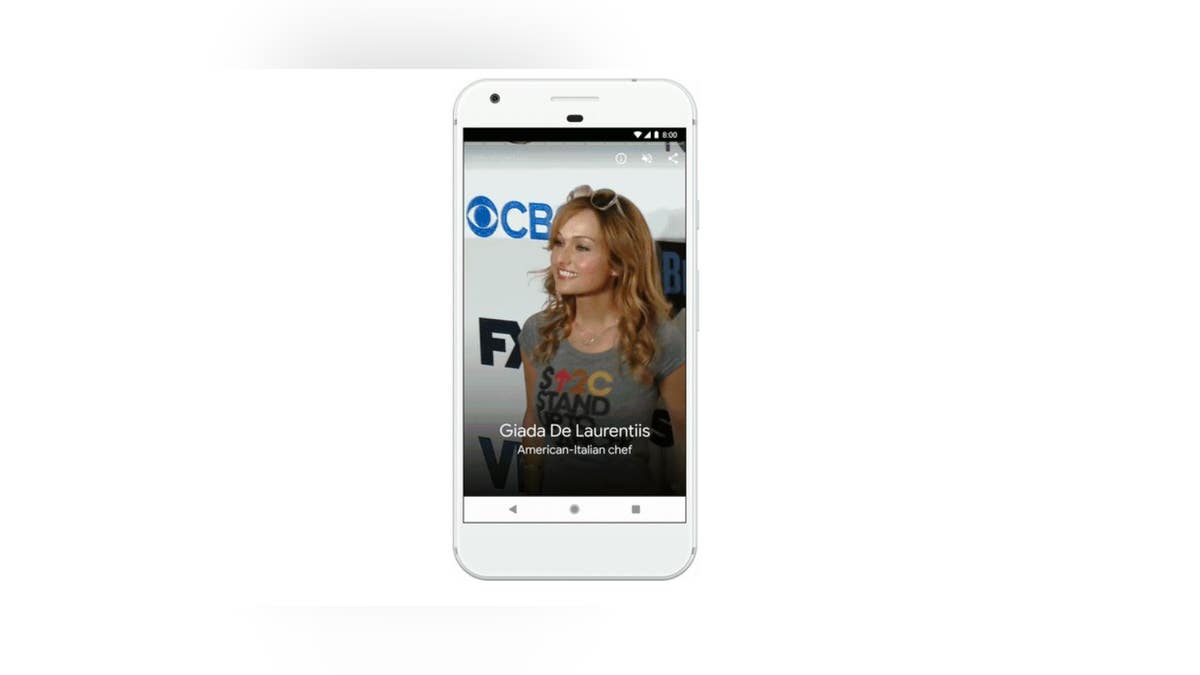

“We’re starting today with stories about notable people—like celebrities and athletes—providing a glimpse into facts and important moments from their lives in a rich, visual format. This format lets you easily tap to the articles for more information and provides a new way to discover content from the web,” Edwards explained in the blog post.

For video, Google is using “computer vision” to understand what videos contain and highlight the most relevant ones in Search. The search giant calls these Featured Videos, and they will link to subtopics of a search in addition to first-tier content.

“For Zion National Park, you might see a video for each attraction, like Angels Landing or the Narrows,” Edwards wrote. “This provides a more holistic view of the video content available for a topic, and opens up new paths to discover more.”

The algorithm for Google Images has also been overhauled, with a greater emphasis on web page authority and the freshness of content, according to the blog post. Google will also begin to show more context with images, including captions that display the title of the web page where each image is published, and will suggest related search terms for more guidance.

Lastly, Google is bringing Google Lens, which analyzes images and finds objects of interest within them, to Google Images. If you select an object, Lens will show you relevant images that could then link to a product page. Lens also allows users to “draw” on part of an image to gather more search results.