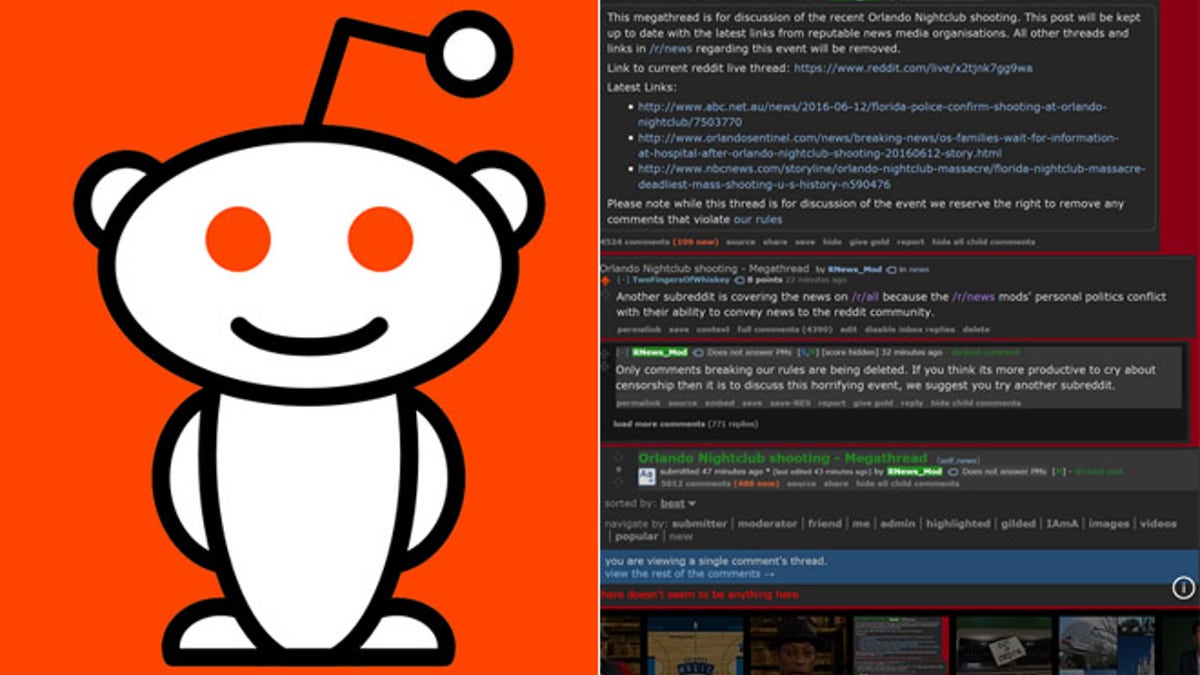

(Screenshot from www.reddit.com)

Reddit users are seeing red over this one.

After the June 12 Orlando massacre by an avowed Islamic radical terrorist, Reddit users started commenting on the news and stating their opinions. Some comments included obvious hate speech that violated the news networking site’s published standards, but many were simply calling out Orlando shooter Omar Mateen as an Islamic extremist. Others were merely suggesting where to donate blood in the aftermath. Many just linked to news reports.

A Reddit moderator deemed entire threads offensive and deleted them in what was seen as an obvious attempt to remove comments that took a certain political viewpoint, especially those that suggested Islamic terrorist attacks are on the rise in the United States.

Users complained about the censorship.

“The vast and overwhelming majority of removed comments were not hate speech,” said one forum user. “All they had in common is that they mentioned radical Islam. There is proof of this fact all over Reddit, and it has been documented by multiple unaffiliated third party websites.”

Last week, Reddit moderators finally admitted the error and explained their actions, blaming the controversy on comment filters and bots that incorrectly flagged hate speech. A Reddit user managed to capture the deleted posts, which are mostly comments about Islamic terrorism.

Whether the cause was a bot, a politically correct moderator, or some other agenda, it’s clear that most of the comments were removed from a site known for open and free speech. Critics say the site broke its unwritten contract with users who expect the forum to facilitate the free and open exchange of ideas.

Analyst Rob Enderle of the Enderle Group told FoxNews.com that the vast majority of the Reddit posts should not have been deleted and that their removal is a form of censorship.

“After reading this I would personally never use Reddit again,” Enderle said. “It is a showcase of how not to do moderation and maybe how [to] kill an online service. We will see.”

Danny Paskin, an associate professor of journalism at California State University, told FoxNews.com the problem is with Reddit itself. It has become a mainstream site with 230 million active users per month. Yet, it started as a forum for users to express their views openly. There are codes of conduct and laws about hate speech, but Reddit users still have rights.

“What's being said doesn't fall under any unlawful category, Reddit should not be censoring it,” Paskin said. “Reddit has to make a choice: Does it want to truly be a forum for public exchange of information, or does it want to be a private company running under its own rules?”

A Reddit spokesperson refused to answer questions about policies and pointed only to the site’s published code-of-conduct rules and moderator discussions about the controversy.

There’s a larger issue at work here, however. Social media sites and online forums like Reddit have to decide how to police user comments for hate speech, yet not be seen as being guided by their own political agenda.

Facebook in particular has handled this topic poorly so far.

In May, the social network came under fire following a Gizmodo report that stories about conservative topics were prevented from appearing in Facebook’s trending module.

Facebook said it found no evidence of ‘systematic’ political bias related to its Trending Topics section, but acknowledged the possibility that rogue employees could have impacted the controversial feature.

In June, a Facebook page by political activist Pamela Geller called “Stop Islamization of America” was removed after it was deemed a violation of user policies. Facebook later admitted the page was removed in error and restored it, saying the page had been incorrectly flagged as hate speech. But the damage was done.

“Mark Zuckerberg, under pressure from [German chancellor] Angela Merkel and others, has adopted a policy of censoring news that portrays Muslim migrants in an unfavorable light and reveals the jihadi motivations of the Orlando killer,” Geller told FoxNews.com. “A primary avenue for disseminating politically incorrect information is being closed.”

Facebook released this statement to FoxNews.com: “We aim to find the right balance between giving people a place to express themselves and promoting a welcoming and safe environment. Not all disagreeable or disturbing content violates our Community Standards.”

Enderle says the act of policing comments is largely arbitrary. A bot scouring for hate speech might have incorrectly flagged words such as “blood” or “Islamic.” He says sites have three options for handling user comments: allow all comments freely, use a method that is “surgical” and not one-sided or politically motivated, or not allow comments at all.

Erna Alfred Liousas, a Forrester analyst, told FoxNews.com that Reddit in particular has to adhere to a code of conduct and let users know what is and what isn’t allowed. She says that if users aren’t happy with the policies, they can always find a different forum.

Maybe that’s exactly what will happen with millions of Reddit users.