Facebook could soon have a facial recognition feature that’s just short of our own abilities. The social network’s researchers have created DeepFace, an algorithm that can identify faces almost as well as humans can.

Built by Yaniv Taigman and colleagues at Facebook’s artificial intelligence lab, DeepFace addresses a major flaw of most facial recognition systems: if faces in the photos are off-center, the software struggles to pinpoint a match.

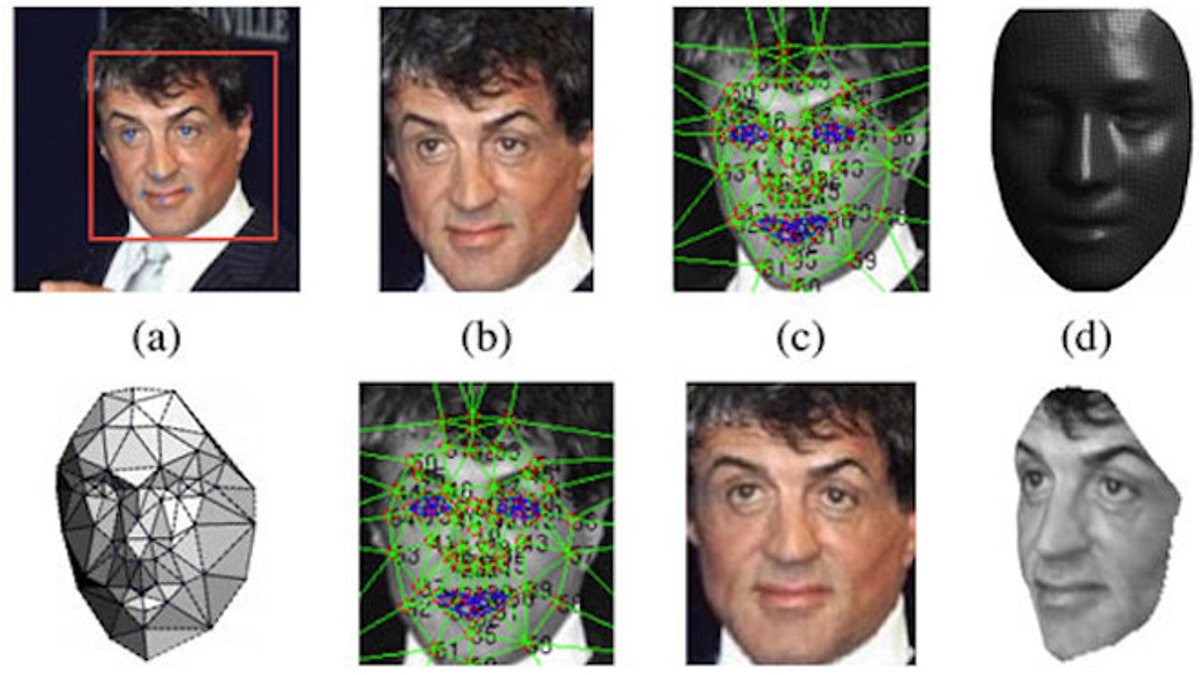

Taigman’s team built a 3-D model of a face from a photo, which can be rotated into the ideal position for the algorithm to find a match. Then, a simulated neural network computes a numerical description of the repositioned face. If DeepFace finds enough similarities between the descriptions of two photos, it declares a match.
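
To make the last step concrete, here is a minimal Python sketch of how two numerical descriptions might be compared. The cosine similarity measure, the function names and the 0.8 threshold are all assumptions chosen for illustration; the article does not say how DeepFace scores similarity.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length descriptor vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_match(desc_a, desc_b, threshold=0.8):
    """Declare a match when the descriptors are similar enough.

    The threshold is a hypothetical value, not one reported for DeepFace.
    """
    return cosine_similarity(desc_a, desc_b) >= threshold

# Toy descriptors; real ones would come from the neural network.
same_person = is_match([0.9, 0.1, 0.4], [0.8, 0.2, 0.5])
different_people = is_match([1.0, 0.0], [0.0, 1.0])
```

In a sketch like this, raising the threshold would produce fewer false matches at the cost of missing more true ones.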

“This deep network involves more than 120 million parameters using several locally connected layers without weight sharing, rather than the standard convolutional layers,” researchers explained. “Thus, we trained it on the largest facial dataset to date, an identity-labeled dataset of four million facial images belonging to more than 4,000 identities, where each identity has an average of over a thousand samples.”
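
The parameter count climbs quickly without weight sharing because every output position gets its own set of filters. The rough Python sketch below compares the two layer types; the layer sizes are hypothetical and are not DeepFace’s actual dimensions.

```python
def conv_params(kernel, in_channels, out_channels):
    """Weights plus biases for one convolutional layer (filters shared everywhere)."""
    return kernel * kernel * in_channels * out_channels + out_channels

def locally_connected_params(kernel, in_channels, out_channels, out_height, out_width):
    """Same filter shape, but a separate copy for every output position."""
    per_position = kernel * kernel * in_channels * out_channels + out_channels
    return out_height * out_width * per_position

# Hypothetical layer: 3x3 filters, 16 channels in and out, 10x10 output map.
shared = conv_params(3, 16, 16)
unshared = locally_connected_params(3, 16, 16, 10, 10)
ratio = unshared // shared  # one filter set per output position
```

For this toy layer, the unshared version needs as many times more parameters as there are output positions, which suggests why such a network calls for a very large training set.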

The researchers achieved a 97.25 percent accuracy rate for positive matches. That falls just shy of the 97.5 percent accuracy rate humans are capable of achieving. However, DeepFace remains solely a research project. Researchers will present their work at the IEEE Conference on Computer Vision and Pattern Recognition in June.