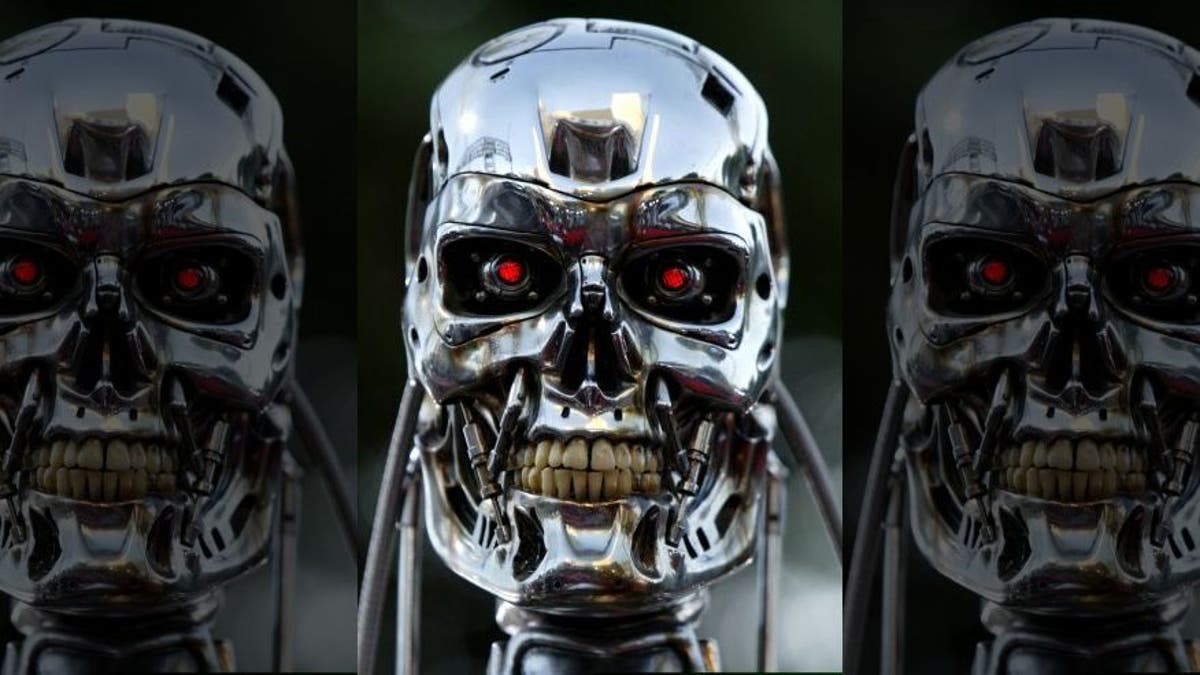

File photo - A robot from the movie is on display for the premiere of the motion picture Terminator 3 "Rise of the Machines" June 30, 2003 in west Los Angeles. (REUTERS/Mike Blake)

Fully autonomous weapons would breach international law if used in a theater of war, advocates say, claiming there is a “moral imperative” to ban robots that are programmed to kill.

As the U.S., China and Russia push to become leaders in weapons powered by artificial intelligence, longstanding calls have grown for a ban on killer robots, which experts fear could lead to all-out, highly destructive warfare.

A new report published by Human Rights Watch and Harvard Law School’s International Human Rights Clinic claims that such autonomous weapons would violate the Martens Clause, a widely accepted provision of humanitarian law.

The clause requires emerging technologies to be judged by the “principles of humanity” and the “dictates of public conscience” when they are not already covered by other treaty provisions.

Specialists from 26 countries, including Tesla CEO Elon Musk and Apple co-founder Steve Wozniak, have called for a ban on fully autonomous weapons.

“Permitting the development and use of killer robots would undermine established moral and legal standards,” Bonnie Docherty, senior arms researcher at Human Rights Watch, which coordinates the Campaign to Stop Killer Robots, told the Guardian. “Countries should work together to preemptively ban these weapons systems before they proliferate around the world.”

Docherty continued: “The groundswell of opposition among scientists, faith leaders, tech companies, nongovernmental groups, and ordinary citizens shows that the public understands that killer robots cross a moral threshold. Their concerns, shared by many governments, deserve an immediate response.”

Governments from more than 70 countries are meeting at the United Nations in Geneva on August 27 for the sixth time to discuss the challenges raised by fully autonomous weapons.

A Russian weapons manufacturer displayed a prototype of a killer robot that could pick up and fire weapons. (Kalashnikov Concern)

“The idea of delegating life and death decisions to cold compassionless machines without empathy or understanding cannot comply with the Martens clause and it makes my blood run cold,” Noel Sharkey, a roboticist who wrote about the reality of robot war as far back as 2007, told the British publication.

“Some states would prefer to shift from a prohibition protocol to one that requires a positive obligation to ensure meaningful human control, and both amount to the same humanitarian law,” he added.

Although fully autonomous weapons do not yet exist, experts believe their usage will be widespread in a matter of years. In addition, the paper reports that at least 381 partly autonomous weapon and military robotics systems have been deployed or are under development in 12 nations, including France, Israel, the U.S. and the U.K.

Russia reportedly opposes a ban on fully autonomous weapon systems, joining several other countries, including the U.S., that could seek to block any future negotiations.

Research by the International Data Corporation has suggested that global spending on robotics will double from $91.5 billion in 2016 to $188 billion in 2020.