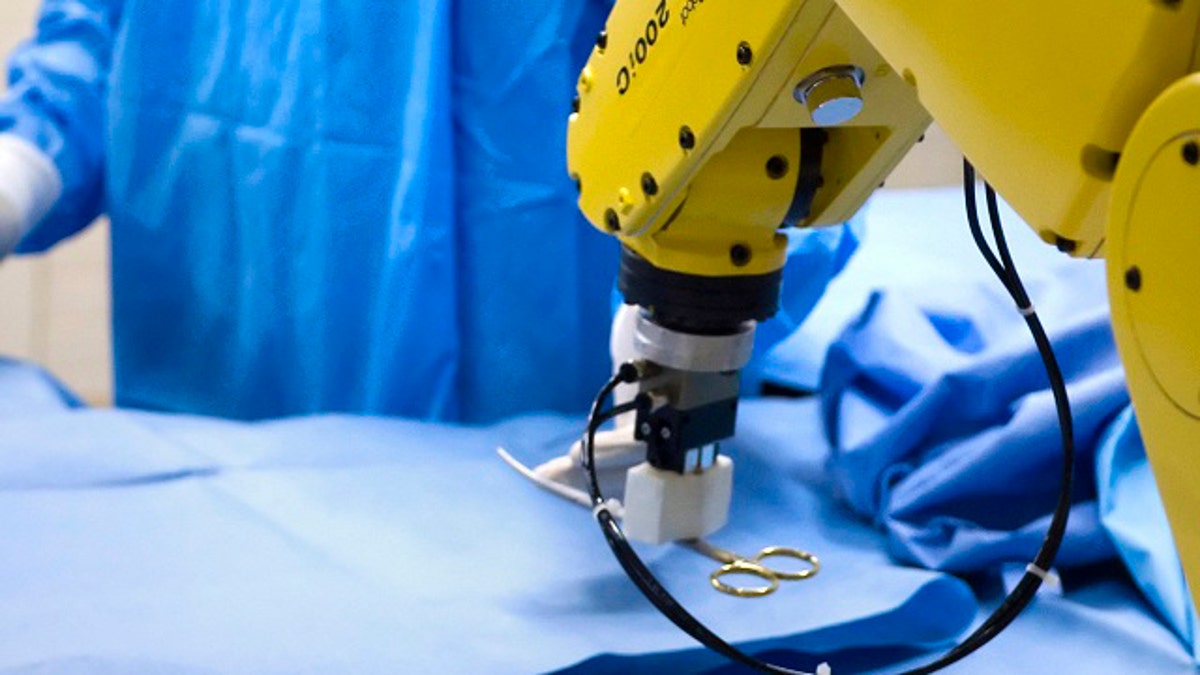

Purdue researchers are developing a system that recognizes hand gestures to control a robot or tell a computer to display medical images of a patient during an operation. (Purdue University photo/Mark Simons)

LAFAYETTE, Ind. – Dr. Daniel Wickert is one of the local obstetrician-gynecologists who use the da Vinci Surgical System, a $1.5 million robot-assisted surgical device housed at St. Elizabeth East.

While sitting at the system's console, Wickert can maneuver four robotic arms during common gynecological surgeries such as a hysterectomy. "We are now making smaller incisions, which allows people to recover quicker," said Wickert, who works at Lafayette Obstetrics & Gynecology.

Not surprisingly, Wickert is open to more robotic devices assisting in the operating room. "I think we are at the beginning of where robots -- or the advantages of advanced technology -- will take us."

Purdue University researchers are developing a gesture-driven robotic scrub nurse prototype that may one day relieve the nurse of some of her technical duties or replace the scrub technician who is at times responsible for fulfilling those tasks.

The vision is for the robot to assume two tasks that are monotonous but extremely important for a scrub nurse or tech -- passing the instruments to the surgeon and monitoring the number of instruments being used -- and to perform them faster than a human. The goal is to help reduce medical errors such as leaving instruments inside a patient's body.

Juan Wachs, an assistant professor of industrial engineering at Purdue, is leading the research team. It includes two industrial engineering doctoral students, Yu-Ting Li and Mithun Jacob. The doctoral duo is responsible for making the robot "intelligent" by creating the needed software.

They are writing the code, performing the experiments, validating the system, summarizing the results and comparing different approaches. Wachs is mentoring, advising and guiding them through the research.

Wachs specializes in machine and computer vision and robotics. The idea for this robotic scrub nurse came about during his graduate-level machine vision and robotics class, held during the fall semester.

By October, Li and Jacob were brainstorming ideas for a final project, and the idea of developing a gesture-driven robotic scrub nurse took shape.

Using natural language, gestures and robotics in the operating room is something Wachs has been working on for the past five years. In 2008, he published research on "Gestix," another vision-based technology that would allow surgeons to search through images in an electronic medical record database by simply moving their hands in front of a large computer screen, as Tom Cruise famously did in the 2002 Steven Spielberg film "Minority Report."

Instead of having to rely on nurses to browse through electronic medical records once an operation starts -- which leaves room for error and delays -- the surgeon could browse through records on his own without leaving the sterile field.

Gestix II is in development, Wachs said.

Li and Jacob did not have to build the robot from scratch. Under Wachs' supervision, they took a $32,500 robotic arm purchased from Fanuc Robotics and developed software that would enable it to see hand gestures and respond accordingly. "Right now, the gestures are being recognized at 95 percent accuracy," Jacob said.

The yellow Fanuc arm weighs about 59 pounds and sits in an aluminum and Plexiglas cage, which protects the user from being injured. Wachs said this type of robotic arm is used for education and research purposes. Larger versions are used in car manufacturing plants.

So far, the robot understands five gestures, one for each of the following surgical instruments: a scalpel, scissors, retractor, forceps and hemostat. Each gesture uses fingers to denote a number. For example, to receive a pair of scissors, the surgeon would hold up two fingers in front of the camera.

The arm would then hand him the scissors using a magnetic gripping device that Li and Jacob created.

The quest to give this machine dynamic eyes and ears continues. Jacob is currently working on upgrading the robot's "seeing" capability by replacing its current eye -- a CCD camera that records much like a video camera -- with a Microsoft Kinect camera. This will give the robot depth perception.

The prototype could be fully functional in about five years, but its development depends on future funding, Wachs said. Right now, the development is being funded through Wachs' start-up research package from Purdue.

Wachs has applied for federal funding from the National Institutes of Health and plans to apply to the National Science Foundation. He hopes to obtain at least $500,000 in total to cover the next five years of research. Most of the federal funding would be used to compensate the research team. A smaller portion, between $50,000 and $60,000, would be used to purchase a longer and faster robotic arm.

The prototype already has its skeptics. Dr. Ruban Nirmalan, a general surgeon with Indiana University Health Arnett, said a robotic scrub nurse would need not only to be fast and to recognize the surgeon's voice and hand gestures flawlessly, but also to "think" and modify its sequence to keep up with a dynamic case.

"If a robot can do the (basic) tasks it may be helpful in some simple, routine operations," he said.

"However, I'm skeptical that we have the technology available now for a robot to accomplish all those tasks. Asking a robot to think for itself, and do it well, will be the biggest hurdle for this device."

Dorothy McClannen, professor of surgical technology and program chairwoman at Ivy Tech Community College, is not concerned that this new technology will replace scrub techs in the operating room.

"I could see (it having) a role in a part of the surgery, but I don't see how it could replace one of the (certified surgical technologists)," she said. "Their job is just way too complex. People think they just pass what the surgeon asks for but it's so much more than that."