Digital safety expert warns of criminals using artificial intelligence to create child pornography

Canopy CMO Yaron Litwin discusses how criminals are using deepfake technology to blackmail teens and generate child pornography.

The rapid advancement of artificial intelligence (AI) programs capable of generating realistic images has led to an explosion in child pornography and blackmail attempts by criminals determined to exploit kids and teenagers.

Yaron Litwin, the CMO and Digital Safety Expert for Canopy, a leading AI solution to combat harmful digital content, told Fox News Digital that pedophiles are leveraging the evolving tools in a variety of ways, often with the intent to produce and distribute images of child sexual exploitation across the internet.

One of these techniques involves editing a genuine photograph of a fully dressed teenager and turning it into a nude image. In one real-world example offered by Litwin, a 15-year-old boy interested in personal fitness joined an online network of gym enthusiasts. One day, he shared with the group a post-workout photo that showed his bare chest. That image was taken, edited into a nude picture and used to blackmail the teen, who initially thought his photo was harmless and safe in the hands of fellow gym goers.

Meta filed 27.2 million reports related to child exploitation in 2022, including 21.2 million from Facebook, five million from Instagram and one million from WhatsApp. (iStock)

In 2022, major social media sites reported a 9% increase in suspected child sexual abuse materials on their platforms. Of those reports, 85% came from Meta digital platforms like Facebook, Instagram and WhatsApp.

Meta's head of safety, Antigone Davis, has previously stated that 98% of dangerous content is removed before anyone reports it to their team, and that the company reports more child sexual abuse materials (CSAM) than any other service.

Litwin said the process of editing existing images with AI has become incredibly easy and fast, often leading to horrible experiences for families. That ease of use also extends to the creation of completely fabricated images of child sexual exploitation, which do not rely on authentic pictures.

"These are not real kids," Litwin said. "These are kids that are being generated through AI and as these AI, as the algorithm is receiving more of these images, it can kind of basically improve itself, in a negative way."

According to a recent analysis, AI-generated images of children engaged in sex acts could potentially disrupt the central tracking system that blocks CSAM from the web. In its current form, the system is only designed to detect known images of abuse rather than generated ones. This new variable could lead law enforcement to spend more time determining whether an image is real or generated by AI.

Child Sexual Abuse Materials (CSAM) are typically filtered out by AI generators. However, criminals are finding new ways to generate the content. (Ljubaphoto/Getty)

Litwin said these images also pose unique questions about what violates state and federal child protection and pornography laws. While law enforcement officers and Justice Department officials affirm that such materials are illegal even if the child in question is AI-generated, no such case has been tried in court.

Furthermore, past legal arguments indicate that such content could be a grey area in U.S. law. For example, the Supreme Court struck down several provisions banning virtual child pornography in 2002, arguing that the law was too broad and could even encompass and criminalize depictions of teen sexuality in popular literature.

At the time, Chief Justice William H. Rehnquist wrote an ominous dissent that anticipated many of the ethical concerns of the new AI revolution.

"Congress has a compelling interest in ensuring the ability to enforce prohibitions of actual child pornography, and we should defer to its findings that rapidly advancing technology soon will make it all but impossible to do so," he said.

A variety of other factors have exacerbated concerns around online child sexual exploitation. While generative AI tools have been massively beneficial in the creation of new photographs, art pieces and illustrated novels, pedophiles are now using special browsers to engage with forums and share step-by-step guides on how to make new illicit materials with ease. These images are then shared or used to cultivate a fake online persona to converse with kids and earn their trust.

Although most AI programs, such as Stable Diffusion, Midjourney and DALL-E, have restrictions on what prompts the system will respond to, Litwin said criminals are beginning to leverage open-source algorithms available on the dark web. In some cases, easily accessible AI programs can also be tricked, using specific wording and associations to bypass established guardrails and respond to potentially nefarious prompts.

According to Litwin, the sheer abundance of generated explicit material makes these images challenging to block and filter, which in turn makes it easier to lure kids and expose them to harmful content.

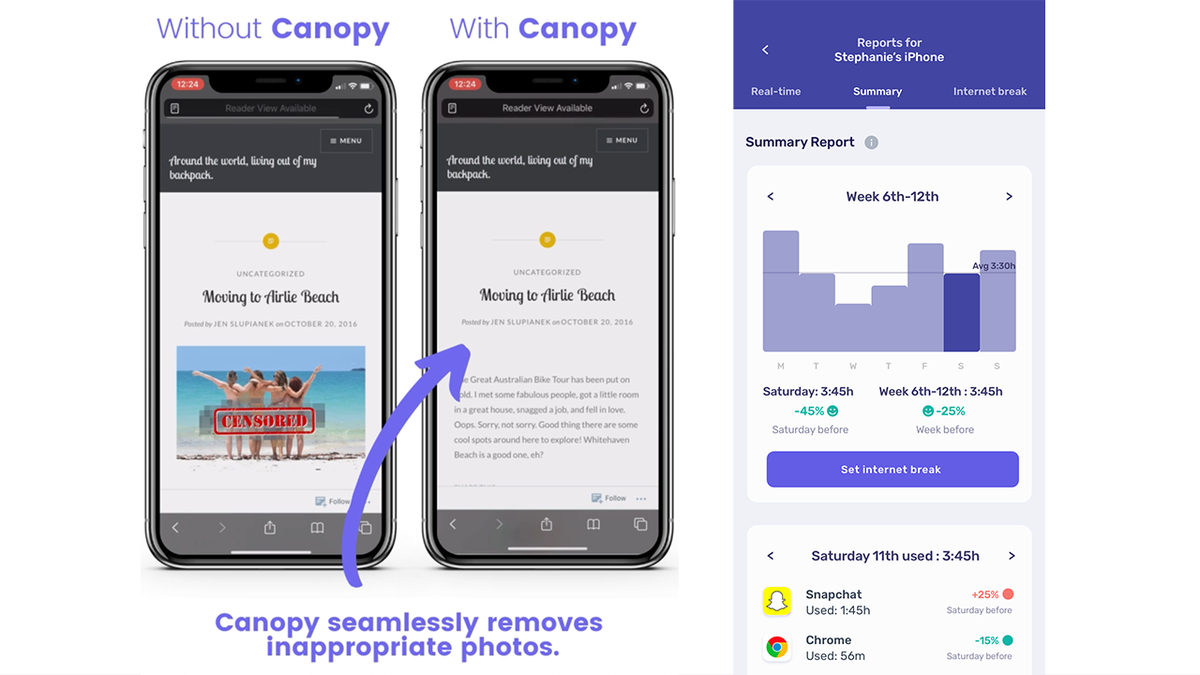

In this demonstration, the Canopy AI solution analyzes images on Google and blocks prohibited content in real-time. (Canopy)

"If I weren't in this industry, I don't know, as a parent, if I would be fully aware of where this could go and what could be done," Litwin added.

However, he noted that while AI can potentially harm children, Canopy was developed, through 14 years of AI algorithm training, to be an example of "AI for good."

Canopy is a digital parenting app that detects and blocks inappropriate content in milliseconds before it reaches a child's computer or phone screen.

"As you're browsing the internet, as you're looking on social media, if an image were to come up, it would filter it out. Most products out there will just block the entire website. Once it identifies a potential image. We can actually just filter it all so you can still browse," Litwin said.

Canopy has the ability to seamlessly remove inappropriate photos from webpages and social media platforms and provides parents with detailed summaries about their child's internet use. (Canopy)

The solution uses advanced computing technology, including AI and machine learning, to recognize and filter out inappropriate content on the web and popular social media apps.

In addition to the Smart Filter, which detects and blocks inappropriate images and videos, Canopy provides sexting alerts that can help detect and prevent inappropriate photos from being shared.

"[A child] might think a child their age is on the other end when it really isn't. And so, we can kind of put that barrier between perhaps a bad decision and letting the parents kind of decide, you know, if they want to allow that or not," Litwin said.

The solution also includes Removal Prevention, which ensures Canopy can't be deleted or disabled without the permission of a parent or guardian.