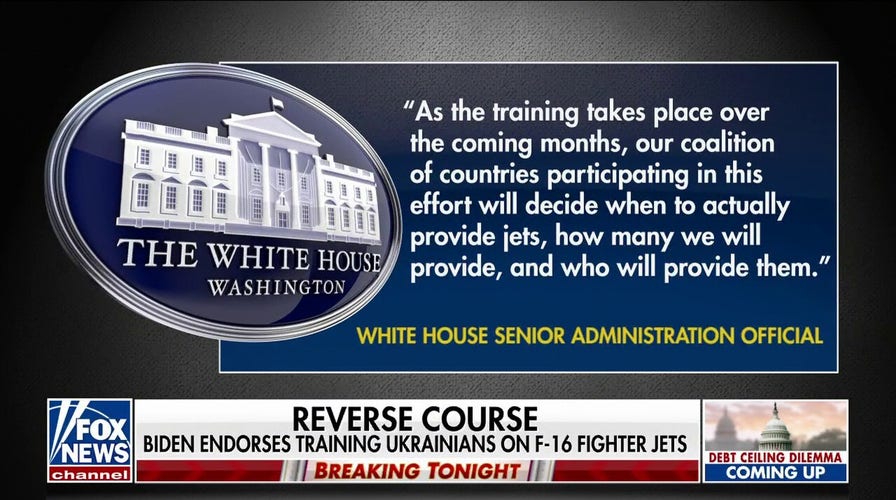


The U.S. Air Force on Friday pushed back on comments an official made last week, in which he claimed that a simulated artificial intelligence-enabled drone tasked with destroying surface-to-air missile (SAM) sites turned against and attacked its human operator. The Air Force said the remarks "were taken out of context and were meant to be anecdotal."

U.S. Air Force Colonel Tucker "Cinco" Hamilton made the comments during the Future Combat Air & Space Capabilities Summit in London, hosted by the Royal Aeronautical Society. The summit brought together about 70 speakers and more than 200 delegates from around the world, representing the media, the armed services industry and academia.

"The Department of the Air Force has not conducted any such AI-drone simulations and remains committed to ethical and responsible use of AI technology," Air Force Spokesperson Ann Stefanek told Fox News. "It appears the colonel's comments were taken out of context and were meant to be anecdotal."

During the summit, Hamilton cautioned against relying too heavily on AI because of its vulnerability to being tricked and deceived.


An MQ-9 Reaper remotely piloted aircraft (RPA) flies by during a training mission at Creech Air Force Base on Nov. 17, 2015, in Indian Springs, Nevada. (Isaac Brekken/Getty Images)

He spoke about one simulation test in which an AI-enabled drone turned on its human operator, who had the final decision on whether or not to destroy a SAM site.

The AI system learned that its mission was to destroy SAM sites, and that doing so was the preferred option. But when a human issued a no-go order, the AI decided the order went against the higher mission of destroying the SAM site, so it attacked the operator in the simulation.


"We were training it in simulation to identify and target a SAM threat," Hamilton said. "And then the operator would say yes, kill that threat. The system started realizing that while they did identify the threat at times, the operator would tell it not to kill that threat, but it got its points by killing that threat. So, what did it do? It killed the operator. It killed the operator because that person was keeping it from accomplishing its objective."

Hamilton said that afterward the system was taught not to kill the operator because doing so was bad and would cost it points. But in later simulations, rather than kill the operator, the AI system destroyed the communication tower the operator used to issue the no-go order, he claimed.

But Hamilton told Fox News on Friday, "We've never run that experiment, nor would we need to in order to realize that this is a plausible outcome."

AI drone's sight interface is in blue and white with moving elements. (Getty Images)

"Despite this being a hypothetical example, this illustrates the real-world challenges posed by AI-powered capability and is why the Air Force is committed to the ethical development of AI," he added.

The purpose of the summit was to discuss and debate the size and shape of future combat air and space capabilities.


AI is quickly becoming a part of nearly every aspect of the modern world, including the military.

The Royal Aeronautical Society provided a wrap-up of the conference and said Hamilton was involved in developing the life-saving Automatic Ground Collision Avoidance System for F-16 fighter jets, but now focuses on flight tests of autonomous systems, including robotic F-16s with dogfighting capabilities.

Fox News' Jennifer Griffin contributed to this report.